AgentRace: Benchmarking Efficiency in LLM Agent Frameworks

The first benchmark specifically designed to systematically evaluate the efficiency of LLM agent frameworks across representative workloads.

Explore the core architecture and pipeline design below.

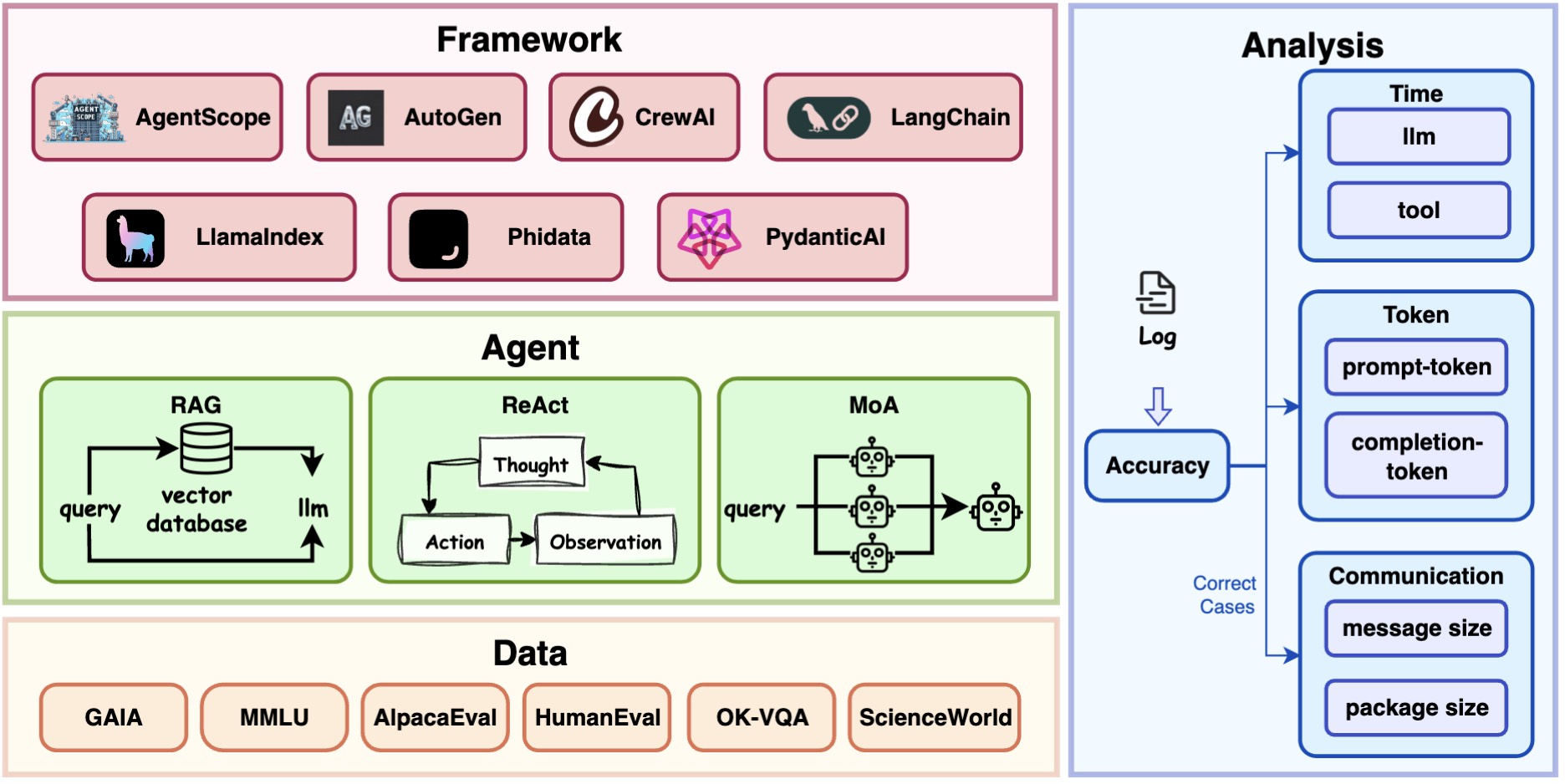

The Architecture of AgentRace

AgentRace comprises four interconnected modules (Data, Agent, Framework, and Analysis) that together cover diverse agent frameworks, execution workflows, task complexities, and performance analysis.

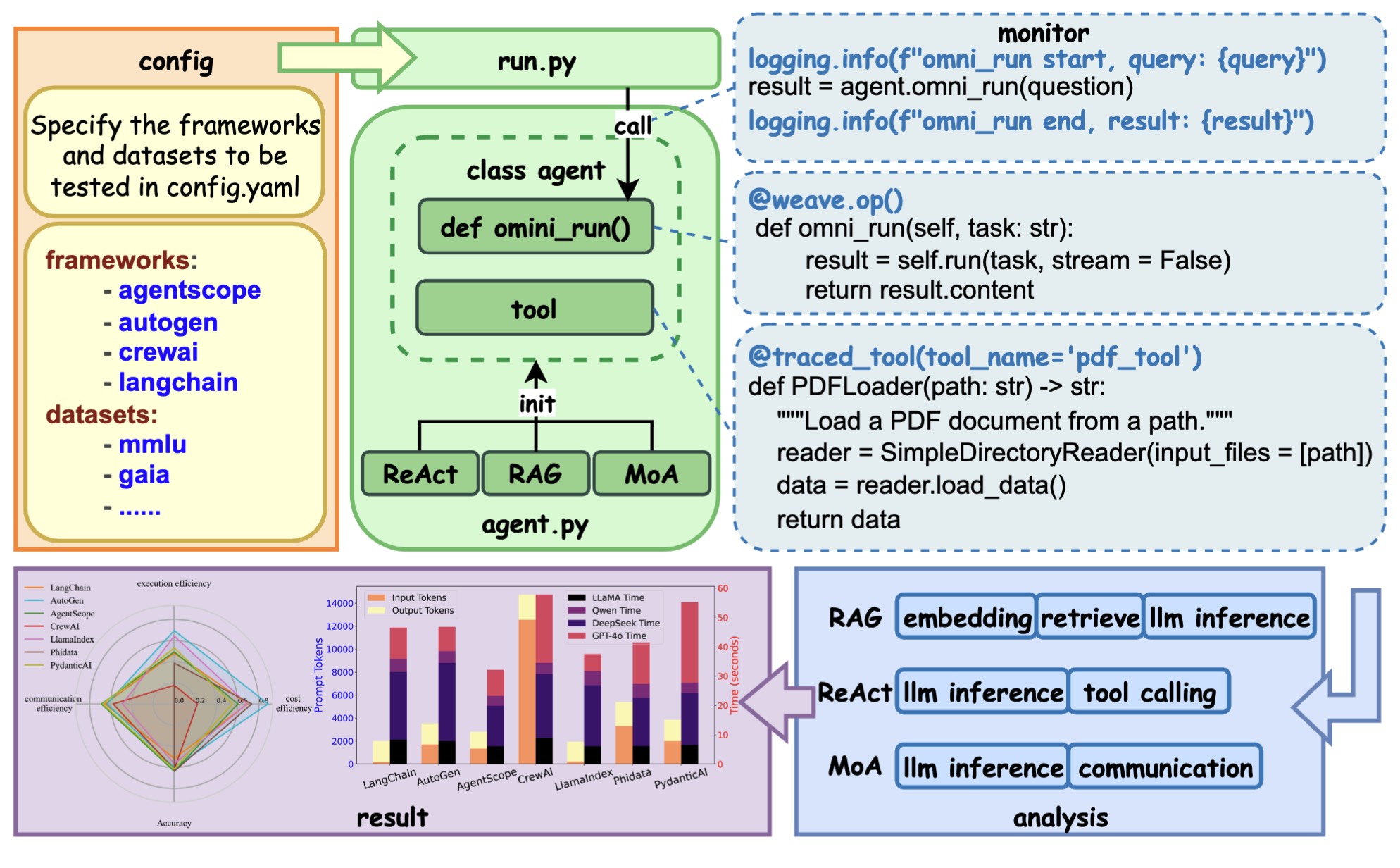

The Pipeline of AgentRace

The pipeline is fully modular and consists of three main stages: (1) configuration, (2) execution and monitoring, and (3) analysis and visualization.

● In the configuration stage, users specify experimental parameters (e.g., framework, workflow, dataset, and tools) in a YAML file.

● In the execution and monitoring stage, the executor parses this file and instantiates the corresponding agent through unified interfaces. The agent then interacts with the chosen framework and tools under controlled settings, while a monitoring layer is dynamically attached to capture runtime behavior.

● Finally, the analysis stage aggregates the collected traces into structured logs and performance visualizations for reproducibility and cross-framework comparison.
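
The three stages above can be illustrated with a minimal Python sketch. It is not AgentRace's actual code: the configuration keys, the `Monitor` class, and the `build_agent`, `analyze`, and `run` functions are all hypothetical names chosen for illustration, the configuration is an in-memory dictionary standing in for the YAML file, and the "agent" is a toy function rather than a real framework.

```python
import time
from dataclasses import dataclass, field
from statistics import mean

# Stage 1 (configuration): a stand-in for the YAML file.
# The key names and values are illustrative assumptions, not the real schema.
CONFIG = {
    "framework": "dummy_framework",
    "workflow": "react",
    "dataset": ["What is 2 + 2?", "Name a prime number."],
    "tools": ["calculator"],
}


@dataclass
class Trace:
    """One monitored agent call."""
    query: str
    latency_s: float
    tool_calls: int


@dataclass
class Monitor:
    """Hypothetical monitoring layer that records a Trace per call."""
    traces: list = field(default_factory=list)

    def wrap(self, agent_fn):
        # Attach monitoring dynamically by wrapping the agent callable.
        def monitored(query):
            start = time.perf_counter()
            answer, tool_calls = agent_fn(query)
            elapsed = time.perf_counter() - start
            self.traces.append(Trace(query, elapsed, tool_calls))
            return answer
        return monitored


def build_agent(config):
    """Stand-in for the executor: build a callable agent from the config."""
    tools = config["tools"]
    label = f"{config['framework']}/{config['workflow']}"

    def agent(query):
        # Toy behavior: pretend each configured tool is called once.
        return f"[{label}] answer to: {query}", len(tools)

    return agent


def analyze(traces):
    """Stage 3 (analysis): aggregate traces into a structured summary."""
    return {
        "num_queries": len(traces),
        "mean_latency_s": mean(t.latency_s for t in traces),
        "total_tool_calls": sum(t.tool_calls for t in traces),
    }


def run(config):
    """Stage 2 (execution and monitoring), followed by analysis."""
    monitor = Monitor()
    agent = monitor.wrap(build_agent(config))
    answers = [agent(query) for query in config["dataset"]]
    return answers, analyze(monitor.traces)


answers, summary = run(CONFIG)
print(summary)
```

Wrapping the agent callable, rather than editing the agent itself, mirrors the idea of a monitoring layer that is attached at run time: the same wrapper can be applied to any agent that exposes the same call signature, which is what makes cross-framework comparison straightforward in this sketch.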